Recently we got access to a camera that records reasonably good 4k video at 10-bit and 12-bit color depth. So naturally we now need a way to edit this 4k video. While proprietary software like DaVinci Resolve does have free variants that could be useful, those free variants often come with unfortunate restrictions like “no 4k exports” or “no commercial use” (sometimes with questionable definitions of “commercial”).

Warning: this post will not contain much information about editing at all, just a colorful recollection of all the problems we had on the way to a 4k editing setup that works reasonably well. Software is a horrible mess most of the time. This will be quite a ramble. So sit comfortably, grab a soothing object and/or foodstuff of your choice, and prepare to be disappointed.

Requirements

Having the camera isn’t enough to make good videos. The camera is being had, but it’s not the camera that makes the videos–it’s the person wielding the camera. Which means that, if the person is not very experienced at camera-wieldage, has only one lens that introduces a lot of distortion into the image, and no stabilization gear except a tripod with a ball head, the videos just won’t come out that great.

To compensate, the video editor of choice will have to make at least credible attempts at fixing each of those shortcomings: lens distortion correction is a must, and camera stabilization is much appreciated if it exists. Can’t do much about not knowing what we’re doing, but that’ll come with time. (touch wood)

Humble beginnings

Running on Linux, the first choice for video editing was (of course) Kdenlive. Kdenlive does support lens distortion correction and camera stabilization with its many, many video filters. So that’s good.

Now, Kdenlive hasn’t implemented these filters from scratch; it uses a filter framework called frei0r to do the heavy lifting. Sadly the frei0r lens correction filter uses a rather old quadratic algorithm that doesn’t reflect the real-world behavior of lenses very well, and it doesn’t do any interpolation either. Together these two shortcomings mean that it can be impossible to set up the filter so that distortion mostly vanishes without introducing a lot of discrete jumps at the borders of the image. So … Kdenlive lens correction isn’t really up to it, but maybe that’s not a big deal after all. Moving on.
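For comparison, here is a minimal sketch of the two ingredients that filter lacks: a polynomial radial distortion model, r_src = r · (1 + k1·r² + k2·r⁴), which is a common textbook choice (not necessarily the model Blender or Darktable use), and bilinear interpolation, which blends neighboring source pixels instead of snapping to the nearest one and so avoids discrete jumps. The `Gray` image type, the function names and the normalization of r to the half-diagonal are assumptions made for illustration; a real filter would handle all color channels and use coefficients fitted to the lens.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical single-channel float image, stored row-major.
struct Gray {
    int w = 0, h = 0;
    std::vector<float> px;
    float at(int x, int y) const { return px[static_cast<std::size_t>(y) * w + x]; }
};

// Bilinear interpolation: blend the four neighboring pixels instead of
// snapping to the nearest one. Coordinates are clamped to the image.
float sample_bilinear(const Gray& img, float x, float y) {
    x = std::clamp(x, 0.0f, static_cast<float>(img.w - 1));
    y = std::clamp(y, 0.0f, static_cast<float>(img.h - 1));
    const int x0 = static_cast<int>(x), y0 = static_cast<int>(y);
    const int x1 = std::min(x0 + 1, img.w - 1), y1 = std::min(y0 + 1, img.h - 1);
    const float fx = x - x0, fy = y - y0;
    const float top = img.at(x0, y0) * (1 - fx) + img.at(x1, y0) * fx;
    const float bottom = img.at(x0, y1) * (1 - fx) + img.at(x1, y1) * fx;
    return top * (1 - fy) + bottom * fy;
}

// Inverse mapping: for every output pixel, compute where it came from in the
// distorted source using r_src = r * (1 + k1*r^2 + k2*r^4), with r normalized
// to the half-diagonal, then sample the source there.
Gray undistort(const Gray& src, float k1, float k2) {
    Gray out{src.w, src.h, std::vector<float>(src.px.size())};
    const float cx = (src.w - 1) / 2.0f, cy = (src.h - 1) / 2.0f;
    float norm = std::hypot(cx, cy);
    if (norm == 0.0f) norm = 1.0f;  // 1x1 image: avoid dividing by zero
    for (int y = 0; y < src.h; ++y) {
        for (int x = 0; x < src.w; ++x) {
            const float dx = (x - cx) / norm, dy = (y - cy) / norm;
            const float r2 = dx * dx + dy * dy;
            const float scale = 1.0f + k1 * r2 + k2 * r2 * r2;
            out.px[static_cast<std::size_t>(y) * src.w + x] =
                sample_bilinear(src, cx + dx * scale * norm, cy + dy * scale * norm);
        }
    }
    return out;
}
```

With k1 = k2 = 0 the mapping is the identity, which is a handy sanity check before fitting real coefficients.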

Unfortunately stabilization doesn’t fare much better. There’s no way to set control points to stabilize on; instead the filter attempts to keep the image completely stable, apart from some guesses at any panning the camera operator may have done. For cameras that sit on a shaky tripod this works. For everything else it doesn’t.

Oh, and Kdenlive repeatedly crashes trying to edit 4k projects because it runs out of memory.

We’re going to need a bigger ~~boat~~ editor.

Will it blend?

There are a lot of other video editors out there, even when excluding proprietary offerings as mentioned before. Flowblade and Shotcut use the same filter system Kdenlive uses, so they’re out. Pitivi can’t even load the files the camera creates. Cinelerra isn’t packaged on Arch and bothering with custom builds for a video editor is out of scope for now. It also seems to use ffmpeg for lens distortion correction, and ffmpeg uses the same (unsuitable) algorithm seen before. Others don’t even have distortion correction or stabilization.

That leaves … Blender.

Yup, Blender includes a video editor. The lens correction it does is pretty good, if a bit fiddly to configure. It uses a method similar to those found in cameras themselves and in photo processing suites like Darktable, so once configured the results are very good. Stabilization exists as well, and can be tuned to within an inch of its life. Objects to stabilize on can be set, pan and zoom motions can be configured and will be rendered correctly. It’s Nice!™

So all is well now, right? Well. Spoiler alert: endless disappointment is the penance of the optimist.

Grade: Needs Improvement

So let’s load our clips into Blender, fix lens distortion, stabilize the camera, add some effects (bleh, there aren’t a lot of them, but it’ll do!), do some color grading with that nice 10-bit color depth on the input …

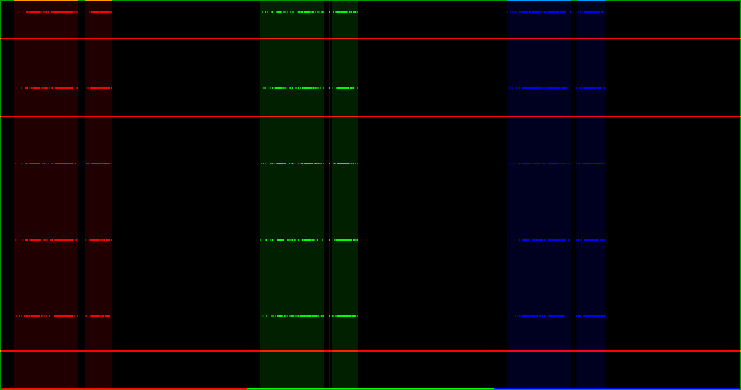

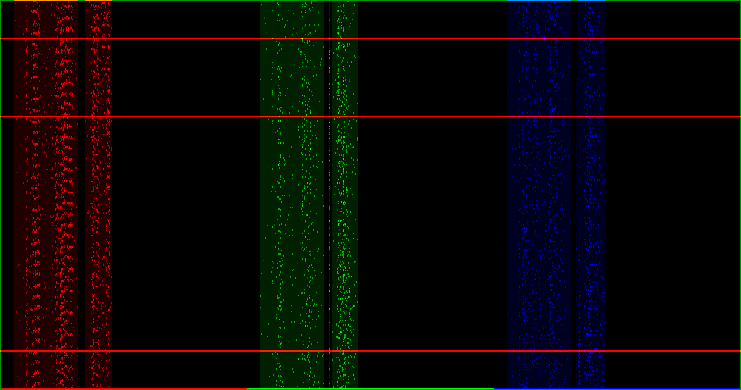

Oh. Oh no. Is that … banding, in the sky? 10-bit input should mean we can grade this pretty harshly and still not introduce any banding! Surely it can’t be. But let’s experiment: let’s expand the sky some more and see. Let’s take, oh, 2% of the color space and expand it to 100%, on some part of the sky. We should now see about 20 different color values, since a 10-bit input has 1024 levels and 2% of those is roughly 20.

Except we don’t. We see five different values, not 20, which is just what 2% of the 256 levels of 8-bit color would give. That suggests the video is handled with 8-bit color instead of 10-bit. A quick chat with the developers confirms this: video is decoded with 8-bit color regardless of what the input file is, and is likewise encoded at 8-bit during rendering even when the rendered image exceeds that. Image sequences should work as expected, though; the 8-bitness seen here is just a limitation of the video path.
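The arithmetic behind that expectation can be checked with a small simulation: take a smooth gradient covering 2% of the full range and count how many distinct code values it lands on when quantized at a given bit depth. This only sketches the quantization math; the function name and the 0.50 to 0.52 range are arbitrary choices, and it says nothing about how Blender processes pixels internally.

```cpp
#include <cmath>
#include <set>

// Quantize a smooth gradient running from `lo` to `hi` (fractions of the full
// range) at the given bit depth and count how many distinct code values appear.
int distinct_levels(int bits, double lo, double hi, int samples = 10000) {
    const int max_code = (1 << bits) - 1;
    std::set<long> codes;
    for (int i = 0; i < samples; ++i) {
        const double v = lo + (hi - lo) * i / (samples - 1);
        codes.insert(std::lround(v * max_code));
    }
    return static_cast<int>(codes.size());
}
```

For a gradient from 0.50 to 0.52 this yields around 20 levels at 10 bits but only a handful (5 or 6, depending on where the range sits) at 8 bits, which matches the bands visible in the sky.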

So let’s try that.

Convert the video from just now to an image sequence with `ffmpeg -i input.mov '%08d.png'`, load it into Blender as an image sequence instead of as a movie, and repeat the same process.

Aaaand–much better!

So converting videos to image sequences solves all the problems. Blender will process the sequences as expected, rendering to image sequences is fully supported, and ffmpeg can even turn an image sequence into a video. Sunshine and unicorns, right? Technically the problem is solved now.

But video compression exists for a reason

Unfortunately solving the problem has not made the problem go away; it has merely transformed one problem into another problem. That new problem is disk space, or more precisely, the lack thereof. At 4k resolution with 16-bit color (because image formats don’t support 10-bit or 12-bit well), each frame is over 30 megabytes in size, even with maximum compression applied. At typical frame rates that makes about one gigabyte per second of video, or around 3.5 terabytes per hour.

Editing large projects at these sizes requires a lot of disks, and that gets expensive very quickly. Disks also have limited read and write speeds, so even with very fast disks that can read and write at 200 MB/s we won’t get any decent frame rate out of the system: at 30 MB per frame, that’s fewer than seven frames per second. Converting videos to images will also take quite a while, easily three times as long as the input videos would play for. And the rendered video will need as much space again.
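These numbers are easy to reproduce. The sketch below assumes UHD 4k (3840×2160) with three 16-bit channels, the roughly 30 MB compressed frame size quoted above, and 30 frames per second; the frame rate is an assumption, since the text doesn’t name one, and the function names are made up for illustration.

```cpp
#include <cstdint>

// Size in bytes of one uncompressed RGB frame with 16-bit samples.
constexpr std::uint64_t raw_frame_bytes(std::uint64_t width, std::uint64_t height) {
    return width * height * 3 /* channels */ * 2 /* bytes per sample */;
}

// Storage needed for `seconds` of footage at a given per-frame size.
constexpr double storage_bytes(double frame_bytes, double fps, double seconds) {
    return frame_bytes * fps * seconds;
}

// Frames per second a disk can sustain if every frame must be read in full.
constexpr double disk_limited_fps(double disk_bytes_per_second, double frame_bytes) {
    return disk_bytes_per_second / frame_bytes;
}
```

`raw_frame_bytes(3840, 2160)` gives 49,766,400 bytes, so the 30 MB figure is already roughly 60% of the uncompressed size. At 30 MB per frame and 30 fps, one second needs 9 × 10⁸ bytes and one hour about 3.2 × 10¹² bytes, and a 200 MB/s disk sustains only about 6.7 frames per second.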

This is what we in the industry call “a bit of a problem”. Luckily we are by now well versed in turning one problem into another problem, and there’s just the thing for that right on the doorstep.

On a short FUSE

That something is FUSE, a library that lets an application create a virtual file system. This virtual file system will look like the real thing to any application on the system, like, say, Blender. There’s also ffmpeg, a library that can read and decode many formats of multimedia files, including the 10-bit files written by the camera. Combining the two removes the need to store all decoded frames on disk: instead we’ll simply decode whichever frame Blender accesses when it accesses it, and when we’re done with it we throw the decoded frame away. FUSE ensures that Blender gets the data, but the data is never stored anywhere except in system memory.

Perfect!
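At the heart of such a file system is a mapping from file names to frames. In a libfuse-based implementation, a function like the one below could be called from the `read` and `getattr` callbacks in `struct fuse_operations` to work out which frame of which clip is being asked for. The layout is hypothetical: `/<clip>/<8-digit frame number>.tif`, numbered from 1, as are the `FrameRequest` and `parse_frame_path` names.

```cpp
#include <cctype>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <string>
#include <string_view>

// Which clip and which (0-based) frame a virtual file stands for.
struct FrameRequest {
    std::string clip;
    std::int64_t frame_index;
};

// Parse a path of the hypothetical form "/<clip>/<8 digits>.tif".
// Returns std::nullopt for anything that isn't a frame file.
std::optional<FrameRequest> parse_frame_path(std::string_view path) {
    constexpr std::string_view ext = ".tif";
    constexpr std::size_t digits = 8;
    if (path.empty() || path.front() != '/') return std::nullopt;
    const std::size_t slash = path.rfind('/');
    if (slash == 0) return std::nullopt;  // a file directly in the root, no clip
    const std::string_view clip = path.substr(1, slash - 1);
    const std::string_view file = path.substr(slash + 1);
    if (clip.empty() || clip.find('/') != std::string_view::npos) return std::nullopt;
    if (file.size() != digits + ext.size() || file.substr(digits) != ext) return std::nullopt;
    std::int64_t number = 0;
    for (char c : file.substr(0, digits)) {
        if (!std::isdigit(static_cast<unsigned char>(c))) return std::nullopt;
        number = number * 10 + (c - '0');
    }
    if (number == 0) return std::nullopt;  // numbering starts at 1
    return FrameRequest{std::string(clip), number - 1};
}
```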

Except that FUSE is a C library. And ffmpeg is a C library. And for any language that’s reasonably fun to use at least one of them doesn’t have a proper binding. So this virtual file system will have to be written in C, or something much like it.

And what a rough C it be

It didn’t end up being C, obviously. C is a relic of a century long past and has no right to exist any more, and yet it carries on. That leaves only C++, which is its own kind of unfortunate. Not because C++ is a horrible language (even though it definitely is a horrible language), but because ffmpeg doesn’t have useful C++ bindings either, apart from some that have long since been abandoned and some that only work on Windows. That means writing a binding from scratch. Luckily it doesn’t have to be complete by any means: just enough to open a video, grab some frames from it, and convert them to some image format.

That should be easy!

17th century architecture

It wasn’t easy. Resource management in ffmpeg is too complicated to go into here, but suffice it to say that it is even more baroque than resource management in C usually is. And resource management in C is the kind of baroque that turns little mistakes like forgetting to add one to a value in the program into problems like ransomware.

It was not fun, but eventually it did work correctly.
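One common way to tame ffmpeg’s resource management in C++ (a sketch, not necessarily what was done here) is to hand every ffmpeg object to a `std::unique_ptr` with a custom deleter. Many ffmpeg free functions take a pointer to the pointer, for example `av_frame_free(AVFrame **)` or `avformat_close_input(AVFormatContext **)`, so they need a small adapter. To keep the example compilable without ffmpeg, it is demonstrated on a hypothetical stand-in C API (`fake_frame_alloc` / `fake_frame_free`).

```cpp
#include <memory>

// Adapts a C "free" function taking T** (ffmpeg style) to a unique_ptr deleter.
template <typename T, void (*Free)(T**)>
struct FreeViaPtrPtr {
    void operator()(T* p) const noexcept { Free(&p); }
};

template <typename T, void (*Free)(T**)>
using Owned = std::unique_ptr<T, FreeViaPtrPtr<T, Free>>;

// Hypothetical stand-in for an ffmpeg-style C API, for illustration only.
struct FakeFrame { int width = 0; };
inline int live_fake_frames = 0;
inline FakeFrame* fake_frame_alloc() { ++live_fake_frames; return new FakeFrame{}; }
inline void fake_frame_free(FakeFrame** f) {
    if (f && *f) { delete *f; *f = nullptr; --live_fake_frames; }
}

// With real ffmpeg this would be, e.g., Owned<AVFrame, av_frame_free>.
using FramePtr = Owned<FakeFrame, fake_frame_free>;
```

Every early return in a decode loop then releases whatever was allocated so far, which is exactly the kind of bookkeeping where manual cleanup in C tends to go wrong.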

Finding frames

Two properties video players usually have that this file system will lack are linearity and seek inaccuracy. Video players usually play a video from start to finish without jumping around in it. This file system will jump around all the time to provide just the frame some application has asked for. Video players have fast-forward and rewind buttons that skip forward or back by some number of seconds, but usually not the exact number of seconds written on the button. This file system will have to jump around to the exact frame an application asks for.

Now, video codecs are usually designed to suit video players that will simply play a video, and when such players do seek they don’t need to hit an exact frame, just something close to the frame they went for. (There are codecs designed to make such exact seeks easy, but we’ll not discuss them here.)

The reason for this is the same reason that makes video files much smaller than sequences of still images: video codecs don’t compress every frame of the video individually as a still image. Instead they compress a few frames as still images (maybe one every few seconds). For the frames that follow, they try to predict how the previous frames will change, and only store whatever information is needed to fix up anything they predicted incorrectly. Since video often moves, and seldom moves very fast or in many different directions at once, this approach works very well. It also means that a decoder can only start decoding at individually compressed frames, not at the much smaller predicted frames. If you’ve ever seen video artifacts like objects melting into a blocky mess, that’s what happens when a decoder jumps into predicted frames without having the reference image.

Luckily this can be worked around by seeking to the nearest individually compressed frame (a keyframe) before the requested one, then decoding and dropping all frames until the requested frame comes around!
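The seek-and-drop strategy can be sketched on a simulated stream. Assumptions: packets are sorted by timestamp and presentation order equals decode order (real streams with B-frames reorder frames, which this ignores), `Packet` and `seek_exact` are hypothetical names, and “decoding” is reduced to walking the packets. In real ffmpeg-based code the jump would likely go through `av_seek_frame` with `AVSEEK_FLAG_BACKWARD`, followed by `avcodec_flush_buffers` and a loop that feeds packets to the decoder.

```cpp
#include <cstddef>
#include <cstdint>
#include <stdexcept>
#include <vector>

// One compressed frame as the demuxer sees it (simplified: presentation order
// equals decode order, i.e. no B-frame reordering).
struct Packet {
    std::int64_t pts;
    bool keyframe;
};

struct SeekResult {
    std::size_t start;    // keyframe index decoding starts from
    std::size_t dropped;  // frames decoded only to be thrown away
    std::size_t found;    // index of the requested frame
};

// Exact seek: jump to the last keyframe at or before `target`, then decode
// forward and drop frames until the one with pts == target comes around.
SeekResult seek_exact(const std::vector<Packet>& packets, std::int64_t target) {
    std::size_t start = packets.size();
    for (std::size_t i = 0; i < packets.size() && packets[i].pts <= target; ++i)
        if (packets[i].keyframe) start = i;
    if (start == packets.size()) throw std::out_of_range("no keyframe before target");
    for (std::size_t i = start; i < packets.size(); ++i) {
        // In real code each packet here would be fed to the decoder, because
        // predicted frames need their predecessors to decode correctly.
        if (packets[i].pts == target) return {start, i - start, i};
        if (packets[i].pts > target) break;
    }
    throw std::out_of_range("no frame with the requested timestamp");
}
```

With a keyframe every five frames, asking for frame 7 starts at frame 5 and throws away two decoded frames on the way.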

This ain’t my first time

Many video formats store not just individual frames, but also the time at which each frame should appear on screen. (A notable exception to this seems to be AVI, which doesn’t store such timestamps. Why.)

Seeking to a specific frame should then be no more complicated than calculating the timestamp of that frame, seeking to the nearest frame we can find, and then decoding frames until we arrive at the timestamp we just calculated. Usually this will work, but (unbeknownst to many) video files do not necessarily have a fixed frame rate. In many video formats it is perfectly legal to state that a video runs at 30 frames per second, but that this specific frame here will stay on screen for 2.1 seconds before the next frame is shown. In this case we could return the same frame 63 times (2.1 seconds at 30 frames per second), which is easy enough.
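Computing a frame’s timestamp is rational arithmetic: frame n starts at n / fps seconds, which then has to be expressed in the stream’s time base. ffmpeg provides `av_rescale_q` for this kind of conversion; the sketch below spells the math out with a hypothetical `Rational` type so it can stand alone, and also counts how many nominal frame slots a long frame covers. It assumes non-negative values and products that fit in 64 bits.

```cpp
#include <cstdint>

// A rational number num/den, as used for frame rates and time bases.
struct Rational {
    std::int64_t num;
    std::int64_t den;
};

// Timestamp (in time_base units) at which frame `n` should start, assuming a
// constant nominal frame rate. Rounds to nearest; non-negative inputs only.
std::int64_t frame_to_pts(std::int64_t n, Rational fps, Rational time_base) {
    // seconds = n * fps.den / fps.num; pts = seconds * time_base.den / time_base.num
    const std::int64_t numer = n * fps.den * time_base.den;
    const std::int64_t denom = fps.num * time_base.num;
    return (numer + denom / 2) / denom;
}

// How many nominal frame slots a frame lasting `duration` (time_base units)
// covers, i.e. how often the same image has to be handed out.
std::int64_t slots_covered(std::int64_t duration, Rational fps, Rational time_base) {
    const std::int64_t numer = duration * time_base.num * fps.num;
    const std::int64_t denom = time_base.den * fps.den;
    return (numer + denom / 2) / denom;
}
```

For example, with a 1/90000 time base at 30 fps, frame 63 starts at pts 189000, and a frame lasting 189000 ticks (2.1 seconds) covers 63 slots.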

It is, however, also possible to show a frame for far less than one nominal frame duration. Naturally this makes seeking to an exact frame rather difficult, since now we may have more than one image in the time of one frame.

Easy enough to just forbid that and report an error whenever such a short frame turns up, so on we go to convert the frames we find to images and return them.
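Putting the repeat and reject rules together gives a small index from nominal frame slots to decoded frames. This is a sketch with a hypothetical name; it treats timestamps as exact, whereas real files may need a little tolerance for rounding in their timestamps.

```cpp
#include <cstddef>
#include <cstdint>
#include <stdexcept>
#include <vector>

// For every nominal frame slot from the first timestamp up to `end_pts`,
// record which decoded frame is on screen. Frames that last longer than one
// slot are repeated; frames shorter than one slot are rejected.
std::vector<std::size_t> build_frame_map(const std::vector<std::int64_t>& pts,
                                         std::int64_t frame_dur, std::int64_t end_pts) {
    if (frame_dur <= 0) throw std::invalid_argument("frame duration must be positive");
    for (std::size_t i = 1; i < pts.size(); ++i)
        if (pts[i] - pts[i - 1] < frame_dur)
            throw std::runtime_error("frame shorter than one nominal frame duration");
    std::vector<std::size_t> map;
    if (pts.empty()) return map;
    std::size_t src = 0;
    for (std::int64_t t = pts[0]; t < end_pts; t += frame_dur) {
        // Advance to the last decoded frame that has started by slot time t.
        while (src + 1 < pts.size() && pts[src + 1] <= t) ++src;
        map.push_back(src);
    }
    return map;
}
```

For frames at timestamps 0, 3000 and 9000 with a frame duration of 3000, the second frame is shown twice, so the slots map to frames 0, 1, 1, 2.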

Spitting images

Fortunately ffmpeg can not only decode videos, but encode them as well. Among its output options are image sequences, such as the PNG sequence used earlier, or sequences of TIF files.

Unfortunately ffmpeg cannot export uncompressed PNG images (and compressing them would be a waste of time here), and its TIF exporter is very slow even when writing uncompressed images. Copying an exported image is three times as fast as creating that image in the first place, even though the image contains only a few hundred bytes of metadata followed by the raw data of the frame. It really shouldn’t take that long to create such an image; in fact it should be nearly free!

Which (of course) means that a custom TIF exporter is necessary. Writing one is easy enough: the TIFF specification is freely available, and creating TIF files is not complicated for the purposes found here.
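For these purposes a baseline TIFF with a single uncompressed strip is enough. Below is a minimal sketch of such a writer for 16-bit RGB, assuming little-endian output and interleaved samples; the function name is hypothetical, and it covers only the tags a baseline RGB reader needs, including the resolution tags the specification requires (set to a nominal 72 dpi). The header comes out at 180 bytes before the pixel data starts.

```cpp
#include <cstdint>
#include <stdexcept>
#include <vector>

namespace {
// Append little-endian integers ("II" byte order) to the output buffer.
void put16(std::vector<std::uint8_t>& b, std::uint16_t v) {
    b.push_back(static_cast<std::uint8_t>(v & 0xff));
    b.push_back(static_cast<std::uint8_t>(v >> 8));
}
void put32(std::vector<std::uint8_t>& b, std::uint32_t v) {
    for (int i = 0; i < 4; ++i) b.push_back(static_cast<std::uint8_t>((v >> (8 * i)) & 0xff));
}
// One 12-byte IFD entry. A single SHORT is stored inline, left-justified;
// everything else here is either a LONG value or an offset to the data.
void put_entry(std::vector<std::uint8_t>& b, std::uint16_t tag, std::uint16_t type,
               std::uint32_t count, std::uint32_t value) {
    put16(b, tag);
    put16(b, type);
    put32(b, count);
    if (type == 3 && count == 1) { put16(b, static_cast<std::uint16_t>(value)); put16(b, 0); }
    else put32(b, value);
}
}  // namespace

// Hypothetical minimal writer: little-endian baseline TIFF, 16-bit RGB,
// interleaved samples, one uncompressed strip.
std::vector<std::uint8_t> write_tiff_rgb16(std::uint32_t width, std::uint32_t height,
                                           const std::vector<std::uint16_t>& rgb) {
    constexpr std::uint16_t kShort = 3, kLong = 4, kRational = 5;
    constexpr std::uint32_t kEntries = 12;
    constexpr std::uint32_t ifd_offset = 8;
    constexpr std::uint32_t bps_offset = ifd_offset + 2 + kEntries * 12 + 4;  // 158
    constexpr std::uint32_t xres_offset = bps_offset + 6;                     // 164
    constexpr std::uint32_t yres_offset = xres_offset + 8;                    // 172
    constexpr std::uint32_t data_offset = yres_offset + 8;                    // 180
    const std::uint64_t samples = std::uint64_t{width} * height * 3;
    if (rgb.size() != samples) throw std::invalid_argument("need width*height*3 samples");
    if (samples * 2 > 0xFFFFFFFFu - data_offset) throw std::length_error("image too large");
    const auto data_bytes = static_cast<std::uint32_t>(samples * 2);

    std::vector<std::uint8_t> b;
    b.reserve(data_offset + data_bytes);
    b.push_back('I'); b.push_back('I');                 // little-endian
    put16(b, 42);
    put32(b, ifd_offset);
    put16(b, static_cast<std::uint16_t>(kEntries));     // tags in ascending order
    put_entry(b, 256, kLong, 1, width);                 // ImageWidth
    put_entry(b, 257, kLong, 1, height);                // ImageLength
    put_entry(b, 258, kShort, 3, bps_offset);           // BitsPerSample -> 16,16,16
    put_entry(b, 259, kShort, 1, 1);                    // Compression: none
    put_entry(b, 262, kShort, 1, 2);                    // PhotometricInterpretation: RGB
    put_entry(b, 273, kLong, 1, data_offset);           // StripOffsets
    put_entry(b, 277, kShort, 1, 3);                    // SamplesPerPixel
    put_entry(b, 278, kLong, 1, height);                // RowsPerStrip: one strip
    put_entry(b, 279, kLong, 1, data_bytes);            // StripByteCounts
    put_entry(b, 282, kRational, 1, xres_offset);       // XResolution
    put_entry(b, 283, kRational, 1, yres_offset);       // YResolution
    put_entry(b, 296, kShort, 1, 2);                    // ResolutionUnit: inch
    put32(b, 0);                                        // no further IFDs
    for (int i = 0; i < 3; ++i) put16(b, 16);           // BitsPerSample values
    put32(b, 72); put32(b, 1);                          // XResolution 72/1
    put32(b, 72); put32(b, 1);                          // YResolution 72/1
    for (std::uint16_t s : rgb) put16(b, s);            // raw pixel data
    return b;
}
```

Because the pixel data is written verbatim after a fixed-size header, producing a frame costs little more than copying it.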

Okay, now we have access to high bit-depth images in Blender, and we can edit! Finally!

… But it’s slow. Like, really slow. Only four frames per second on this machine, at best.

This doesn’t look right

For cases like these Blender supports something called proxies. Instead of working with the full input data all the time, it’ll use a much smaller proxy file with lower resolution, enabling real-time playback and editing of the project even when the machine can’t handle the full input at that rate. For videos the proxy file would be a video encoded at lower resolution; for image sequences (like the ones created here) the proxy is a folder of JPG images created at lower resolution and lower quality.

The file system has to generate these folders, and when Blender reads from these folders it’ll have to take the frames from a previously created proxy clip instead. That’s easy enough, it’s just more of the same.

Except … For image sequences Blender requires at least two proxy folders, one for the main video editor and one for the part that does image stabilization. (Remember that? That was important before we got sidetracked 20 levels deep.) But well, it’s still more of the same.

Except … Blender does not cope well with proxy images that aren’t 8-bit color. Color management goes off the rails (everything suddenly turns very dark), and it’s not much faster than reading the full input file directly either. That’s easy enough to fix, at least: just encode the proxy clips with 8-bit color instead of 10 or 12 bits and return them appropriately.
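The bit-depth reduction itself is a one-liner per sample. This is a hypothetical helper that only rescales with rounding (value · 255 / max, rounded to nearest); it does no color-space or transfer-curve conversion, and which of those the proxies need depends on how Blender interprets them.

```cpp
#include <cstdint>
#include <vector>

// Scale a sample with `bits` of precision (1..16) down to 8 bits, rounding to
// nearest. Works for 10-, 12- and 16-bit input alike.
constexpr std::uint8_t to_8bit(std::uint32_t value, unsigned bits) {
    const std::uint32_t max_in = (1u << bits) - 1;
    return static_cast<std::uint8_t>((value * 255u + max_in / 2) / max_in);
}

// Convert a whole buffer of high bit-depth samples for an 8-bit proxy image.
std::vector<std::uint8_t> to_8bit_buffer(const std::vector<std::uint16_t>& in, unsigned bits) {
    std::vector<std::uint8_t> out;
    out.reserve(in.size());
    for (std::uint16_t v : in) out.push_back(to_8bit(v, bits));
    return out;
}
```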

Except … So far these precreated proxies are for the videos from the camera, but maybe there’s been some lens distortion correction applied (remember that?). For these corrected clips Blender supports proxies as well, and they live in the virtual file system we’re building … But Blender can’t write to that, because what would that even mean? That’s easy enough to fix, at least: we’ll just put a symbolic link where Blender would put the folder and point it to where our precreated proxies live. It’s not very efficient like that, but proxies are small enough that it’s no big deal.

Except …

Except nothing. That’s it! It’s done! It finally works!

We can do 4k editing, with proper color grading, without unsightly artifacts, in real-time. The adventure is over!

Well, mostly. But that’s a story for another time.